I received my PhD in Electrical & Computer Engineering from UIUC, advised by Deepak Vasisht. Previously, I completed my BS in Computer Engineering at UIUC with highest honors. My research interests include ubiquitous computing, sensing, robotics, and health. My work was generously supported by grants from the NSF and USDA. I am fortunate to be a recipient of the Yuen T. Lo Outstanding Research Award, the Rambus Computer Engineering Fellowship, and the Mavis Future Faculty Fellowship, and to have been a Qualcomm Innovation Fellowship finalist.

Email / GitHub / Google Scholar / LinkedIn


Ishani Janveja, Jida Zhang, Emerson Sie, Deepak Vasisht

MobiSys 2026

abstract / bibtex / pdf / code / data

3D localization of transmitters in low orbits is an important emerging problem for many applications such as effective spectrum management, orbital safety, network operations, and the broader goals of space situational awareness. We present StarLoc, a system to geolocate transmitters in space using a combination of orbital modeling and a new interferometric 3D angle-of-arrival estimation technique. StarLoc's design relies on a unique insight: the motion of satellites is governed by orbital dynamics and is therefore along a 2D manifold in a 3D space. This reduces the degrees of freedom in satellite motion and allows us to 3D-locate and track a satellite with just three antennas in a 2D plane. We evaluate StarLoc using signal transmissions from 81 Starlink satellites. Our results show that StarLoc can estimate the 3D angle of a satellite within 0.7° and orbital range within 5 km.

Locate LEO satellites to within 0.7° in angle and 5 km in orbital range with just three antennas!

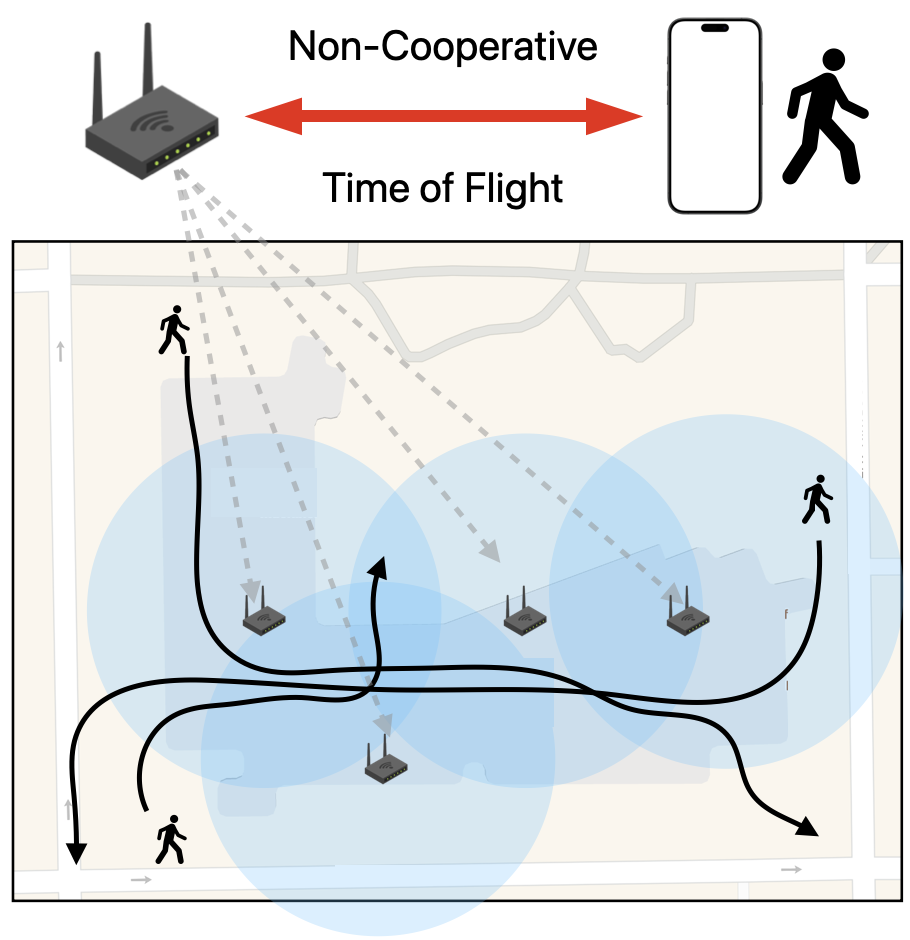

Enguang Fan*, Emerson Sie*, Federico Cifuentes-Urtubey, Deepak Vasisht

MobiCom 2025 (Best Poster Runner-Up)

abstract / bibtex / pdf

Accurate, ubiquitous indoor localization has long been a central goal in wireless systems, yet most proposed methods remain impractical for large-scale deployment. We present PeepLoc, a scalable Wi-Fi-based system that leverages existing infrastructure and unmodified mobile devices. PeepLoc operates in any indoor space with standards-compliant Wi-Fi APs and regular pedestrian traffic. It combines (a) non-cooperative time-of-flight (ToF) extraction from any AP and (b) a crowdsourced bootstrapping approach using pedestrian dead reckoning (PDR) to localize APs as anchors. Implemented on commodity hardware, PeepLoc is evaluated across four buildings, achieving 3.41 m mean and 3.06 m median error, outperforming commercial indoor localization systems and approaching GPS-level accuracy outdoors.

Crowdsourcing GPS-anchored PDR trajectories and non-cooperative Wi-Fi ranging data on commodity smartphones allows bootstrapping Wi-Fi-based indoor positioning systems whose performance is competitive with outdoor GPS.

Emerson Sie, Xinyu Wu, Heyu Guo, Deepak Vasisht

MobiSys 2024

abstract / bibtex / website / arXiv / pdf / code / ROS node / data

Millimeter-wave (mmWave) radar is increasingly being considered as an alternative to optical sensors for robotic primitives like simultaneous localization and mapping (SLAM). While mmWave radar overcomes some limitations of optical sensors, such as occlusions, poor lighting conditions, and privacy concerns, it also faces unique challenges, such as missed obstacles due to specular reflections or fake objects due to multipath. To address these challenges, we propose Radarize, a self-contained SLAM pipeline that uses only a commodity single-chip mmWave radar. Our radar-native approach uses techniques such as Doppler shift-based odometry and multipath artifact suppression to improve performance. We evaluate our method on a large dataset of 146 trajectories spanning 4 buildings and mounted on 3 different platforms, totaling approximately 4.7 km of travel distance. Our results show that our method outperforms state-of-the-art radar and radar-inertial approaches by approximately 5x in terms of odometry and 8x in terms of end-to-end SLAM, as measured by absolute trajectory error (ATE), without the need for additional sensors such as IMUs or wheel encoders.

author = {Sie, Emerson and Wu, Xinyu and Guo, Heyu and Vasisht, Deepak}, title = {Radarize: Enhancing Radar SLAM with Generalizable Doppler-Based Odometry}, booktitle = {The 22nd ACM International Conference on Mobile Systems, Applications, and Services (ACM MobiSys '24)}, year = {2024}, doi = {10.1145/3643832.3661871}

Doppler flow has low translational drift, allowing us to map large-scale indoor environments with radar on diverse platforms.

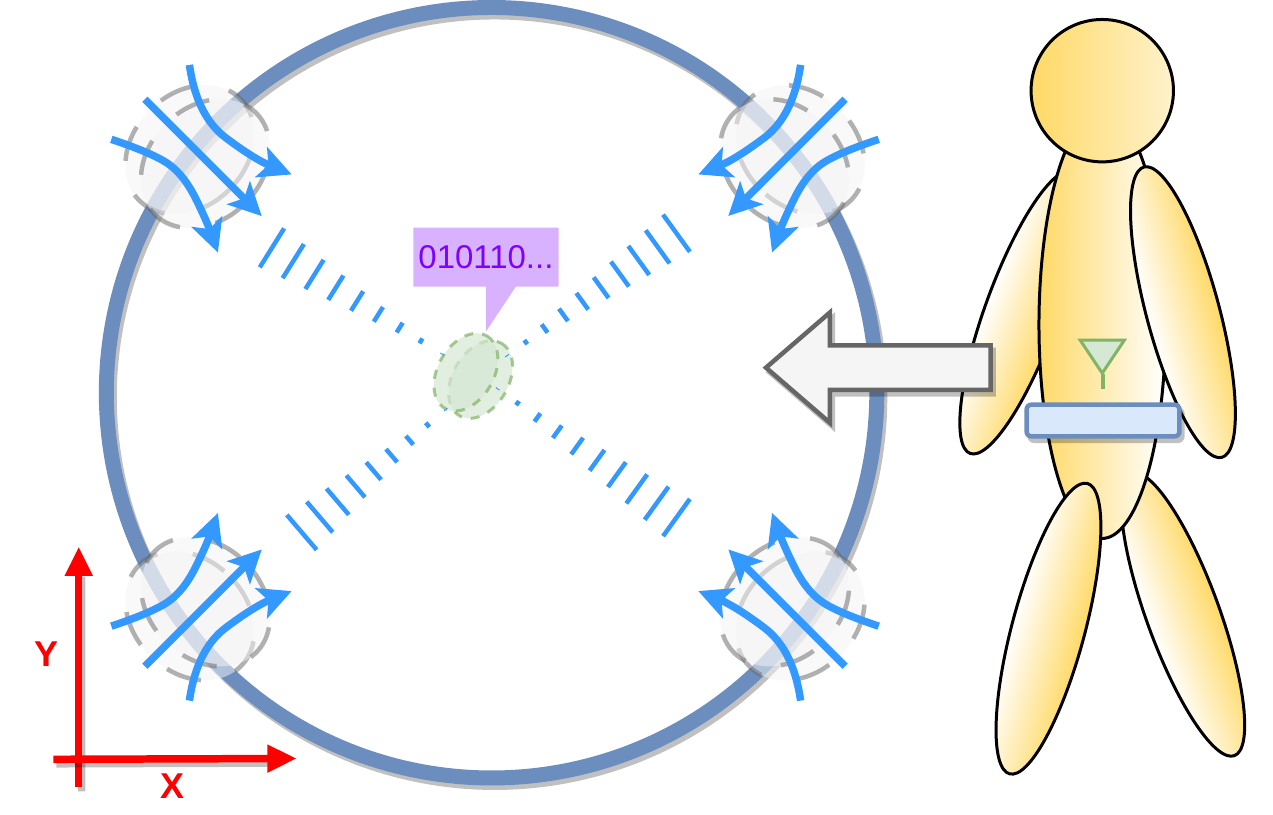

Emerson Sie, Zikun Liu, Deepak Vasisht

MobiCom 2023

abstract / bibtex / website / arXiv / pdf / slides / code / data

Unmanned aerial vehicles (UAVs) rely on optical sensors such as cameras and lidar for autonomous operation. However, optical sensors fail under bad lighting, are occluded by debris and adverse weather conditions, struggle in featureless environments, and easily miss transparent surfaces and thin obstacles. In this paper, we question the extent to which optical sensors are sufficient or even necessary for full UAV autonomy. Specifically, we ask: can UAVs autonomously fly without seeing? We present BatMobility, a lightweight mmWave radar-only perception system for autonomous UAVs that completely eliminates the need for any optical sensors. BatMobility enables vision-free autonomy through two key functionalities: radio flow estimation (a novel FMCW radar-based alternative to optical flow, based on surface-parallel Doppler shift) and radar-based collision avoidance. We build BatMobility using inexpensive commodity sensors and deploy it as a real-time system on a small off-the-shelf quadcopter, showing its compatibility with existing flight controllers. Surprisingly, our evaluation shows that BatMobility achieves comparable or better performance than commercial-grade optical sensors across a wide range of scenarios.

author = {Emerson Sie and Zikun Liu and Deepak Vasisht}, title = {BatMobility: Towards Flying Without Seeing for Autonomous Drones}, booktitle = {The 29th Annual International Conference on Mobile Computing and Networking (ACM MobiCom '23)}, year = {2023}, doi = {10.1145/3570361.3592532}, isbn = {978-1-4503-9990-6/23/10}

Doppler flow enables robust UAV autonomy in adverse environments lacking visual or geometric features.

Bill Tao, Emerson Sie, Jayanth Shenoy, Deepak Vasisht

MobiCom 2023

abstract / bibtex / pdf / slides

Implantable and edible medical devices promise to provide continuous, more directed, and more comfortable healthcare treatments. Communicating with such devices and localizing them is a fundamental, but challenging, mobile networking problem. Recent work has focused on leveraging near-field magnetism-based systems to avoid the challenges of attenuation, refraction, and reflection experienced by radio waves. However, these systems suffer from limited range and require fingerprinting-based localization techniques. We present InnerCompass, a magnetic backscatter system for in-body communication and localization. We present new magnetism-native design insights that enhance the range of these devices. We also design the first analytical model for magnetic-field-based localization that generalizes across different scenarios. We’ve implemented InnerCompass and evaluated it in porcine tissue. Our results show that InnerCompass can communicate at 5 Kbps at a distance of 25 cm, and localize with an accuracy of 5 mm.

author = {Bill Tao and Emerson Sie and Jayanth Shenoy and Deepak Vasisht}, title = {Magnetic Backscatter for In-body Communication and Localization}, booktitle = {The 29th Annual International Conference on Mobile Computing and Networking (ACM MobiCom '23)}, year = {2023}, doi = {10.1145/3570361.3613301}, isbn = {978-1-4503-9990-6/23/10}

Magnetic fields are unaffected by human bodies, making them ideal for in-body communication and localization.

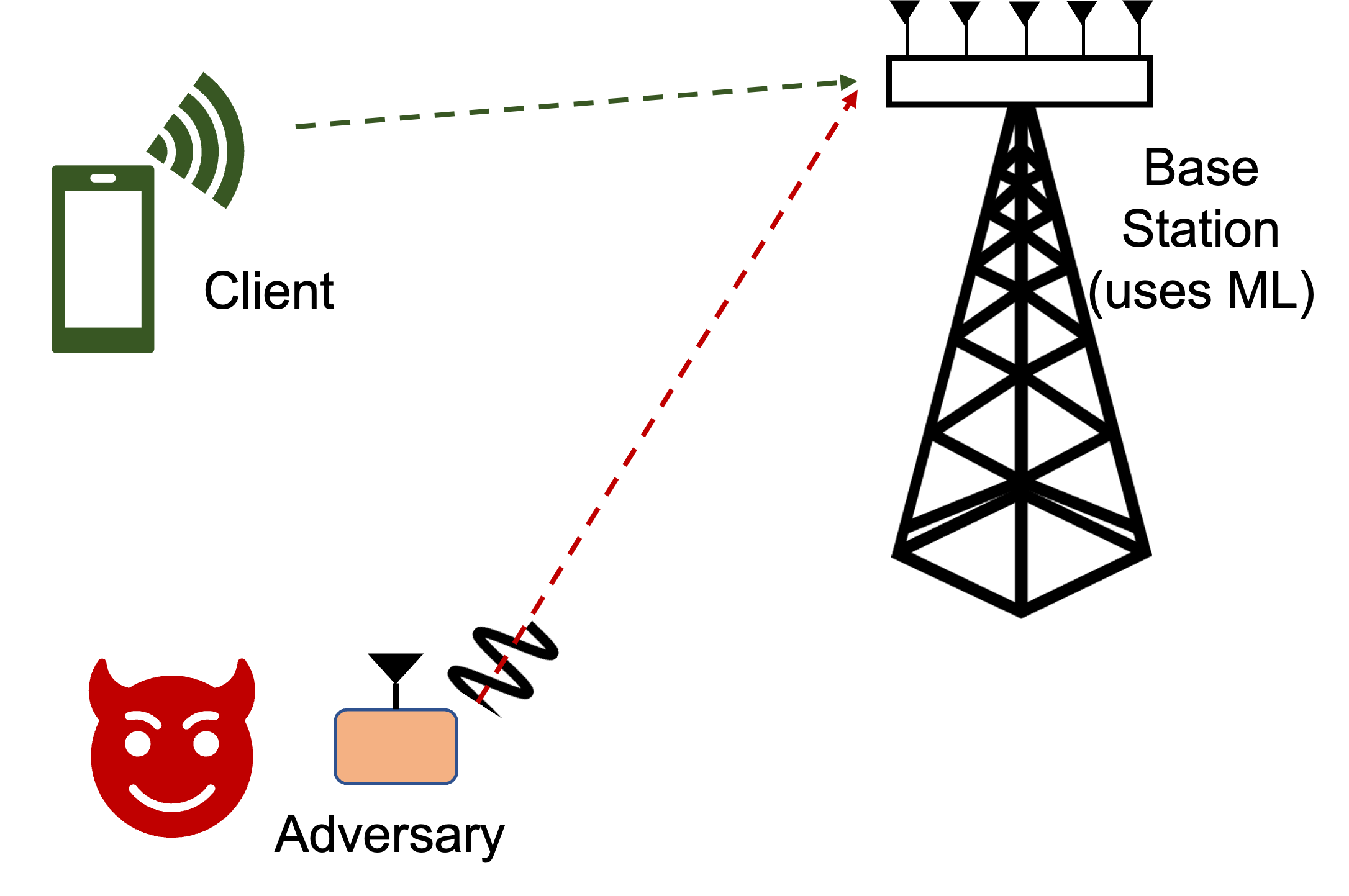

Zikun Liu, Changming Xu, Emerson Sie, Gagandeep Singh, Deepak Vasisht

NSDI 2023

abstract / bibtex / pdf / talk

Machine Learning (ML) is an increasingly popular tool for designing wireless systems, both for communication and sensing applications. We design and evaluate the impact of practically feasible adversarial attacks against such ML-based wireless systems. In doing so, we solve challenges that are unique to the wireless domain: lack of synchronization between a benign device and the adversarial device, and the effects of the wireless channel on adversarial noise. We build RAFA (RAdio Frequency Attack), the first hardware-implemented adversarial attack platform against ML-based wireless systems, and evaluate it against two state-of-the-art communication and sensing approaches at the physical layer. Our results show that both these systems experience a significant performance drop in response to the adversarial attack.

author = {Zikun Liu and Changming Xu and Emerson Sie and Gagandeep Singh and Deepak Vasisht}, title = {Exploring Practical Vulnerabilities of Machine Learning-based Wireless Systems}, booktitle = {20th USENIX Symposium on Networked Systems Design and Implementation (NSDI 23)}, year = {2023}, isbn = {978-1-939133-33-5}, address = {Boston, MA}, pages = {1801--1817}, url = {https://www.usenix.org/conference/nsdi23/presentation/liu-zikun}, publisher = {USENIX Association}, month = apr

We demonstrate real adversarial attacks on 5G machine-learned systems.

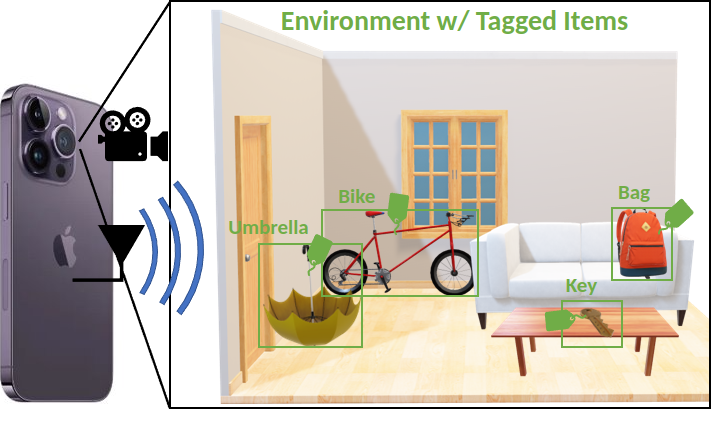

Emerson Sie, Deepak Vasisht

ICRA 2022

abstract / bibtex / arXiv / pdf / slides

Wireless tags are increasingly used to track and identify common items of interest such as retail goods, food, medicine, clothing, books, documents, keys, equipment, and more. At the same time, there is a need for labelled visual data featuring such items for the purpose of training object detection and recognition models for robots operating in homes, warehouses, stores, libraries, pharmacies, and so on. In this paper, we ask: can we leverage the tracking and identification capabilities of such tags as a basis for a large-scale automatic image annotation system for robotic perception tasks? We present RF-Annotate, a pipeline for autonomous pixel-wise image annotation which enables robots to collect labelled visual data of objects of interest as they encounter them within their environment. Our pipeline uses unmodified commodity RFID readers and RGB-D cameras, and exploits arbitrary small-scale motions afforded by mobile robotic platforms to spatially map RFIDs to corresponding objects in the scene. Our only assumption is that the objects of interest within the environment are pre-tagged with inexpensive battery-free RFIDs costing 3–15 cents each. We demonstrate the efficacy of our pipeline on several RGB-D sequences of tabletop scenes featuring common objects in a variety of indoor environments.

author={Sie, Emerson and Vasisht, Deepak}, booktitle={2022 International Conference on Robotics and Automation (ICRA)}, title={RF-Annotate: Automatic RF-Supervised Image Annotation of Common Objects in Context}, year={2022}, volume={}, number={}, pages={2590-2596}, doi={10.1109/ICRA46639.2022.9812072}

We describe a simple method to automate image annotation of objects in real-world environments tagged with proximity-based RF trackers (e.g., RFIDs and AirTags).